As the 2024 election in the US approaches, trust in government and media is eroding. Regulating Big Tech to make sure they and their algorithms are held accountable is paramount.

America is entering what may be the most high-stakes election year in its history. Donald Trump, a twice-impeached former president, at the center of at least four criminal investigations, is again the likely Republican candidate. And with nearly three billion people worldwide also going out to vote in the next two years, it’s no surprise the World Economic Forum’s (WEF) “Global Risks Report” highlighted the acute threats facing these elections. In a report that could have been published in 2016, the WEF declared “misinformation and disinformation” global Enemy Number 1. Elon Musk, of course, dismissed it as a plot to silence dissenting opinion, while the press and the disinformation studies community largely received it as an endorsement and a further call to double down on the fight against falsehood.

At this critical juncture, we need to train our sights on the real problem. And Enemy Number 1 is not disinformation, it’s the attention economy.

Since 2016, millions of dollars have been invested in worldwide initiatives to respond to disinformation, and still extremist propaganda shows no signs of losing its grip on our lives. Solutions to “disinformation” have repeatedly fallen short: some experts assure us that the public can be educated or inoculated to recognise and reject the ubiquitous falsehoods. Yet when citizens turn to search engines to research false claims, researchers at New York University (NYU) found they often unearth information supporting the conspiracy theory. Or as 404 Media report, they may be presented with AI-generated content. NYU’s team explained that “online search to evaluate the truthfulness of false news articles actually increases the probability of believing them.” Meanwhile, trust in journalism in the US and elsewhere has steadily fallen.

Education is a public good of course. But AI-generated mass deception is no longer science fiction, and today’s rapid technological advances are creating an environment where citizens are simply not equipped to detect online fakery through their own critical capacities – no matter how well-trained they are to recognise rhetorical tricks. Disinformation ubiquity will increase uncertainty and force public reliance on experts to assess online information: government institutions, trust and safety processes, journalists, academics, and verification tools – who will be the frontline for an onslaught of paranoid, data-driven character assassinations designed to create distrust and conspiracy theories that destabilise democratic processes.

Other proposed solutions include forms of (self)regulation that would mean Big Tech enforce trust and safety policies with interventions to label or purge falsehoods and racism from the web, rendering us finally safe. Meanwhile, well-intentioned content moderation of user behaviour, and government efforts to tackle misinformation by alerting platforms about policy violations, can be perceived as censorship and provide fodder to be repurposed into the same conspiratorial frames, turning citizens against once-trusted institutions during a time already marked by distrust and division. Recent rulings have restricted the Biden administration even from communicating with platforms. And platforms have retreated from trust and safety efforts, with Meta, Twitter, and YouTube abandoning 17 policies that guarded against hate and disinformation, and laying off more than 40,000 employees between them, according to Free Press.

Add to this the rise of social media oligarchs like Musk who control major platform datasets and algorithms, and Mark Zuckerberg, whose Facebook turns 20 this week and remains as unaccountable as ever. The consolidation of power in so few hands intensifies the concern highlighted by Zeynep Tufekci, that a biased platform could use social engineering “to model voters and to target voters of a candidate favourable to the economic or other interests of the platform owners” with zero transparency. As researchers at the University of North Carolina (UNC) have noted, the solutions must go beyond journalism and digital literacy to include structural changes. They argue for antitrust laws and reducing monopoly power, and indeed there are important steps forward: the first antitrust suit against a Big Tech company, Google, goes to trial in September. But would this alone solve the problem?

Solutions predicated on a “disinformation” problem will always be inadequate as they do not recognise the higher-magnitude threat that the attention economy has created: an infrastructure to enable, incentivise, and profit from campaigns that systematically weaponise anxiety. Even Claire Wardle, author with Hossein Derakhshan of one of the most influential reports that focused the policy and research community on “Misinformation, Disinformation and Malinformation,” has observed, “Repairing the information environment around the election involves more than just ‘tackling disinformation.’”

The frustrating irony of the obsession with mis- and disinformation is that most of this discourse actually refers to conspiracy theories. And the attractive power of conspiracy theories is not in the falsehood of the claims – but in the ideological stories that bind communities into “us” against “them,” and the extremist narratives of good and evil that can offer feelings of certainty in a confusing, threatening world. Conspiracy theories have long been around, but we are seeing a greater impact as algorithms monetise spread by engagement, and our neuroses suddenly become big business and big politics. With new enterprise and large margins, we have witnessed a whole related industry emerge: lawfare creates corruption or election fraud narratives, intelligence firms unearth “evidence,” “silenced” influencers become deafening, and fundraisers profit off the “Trump on Trial” memes. As a result, threats become violence as the influence industry gets rich. And the situation has never been so urgent. In 2016, the Capitol Police investigated just 902 threats against lawmakers. These have since spiralled – last year the figure reached 8,008 cases. Meanwhile, Trump has refused to sign an Illinois loyalty pledge for 2024 candidates promising not to advocate the “overthrow of the government of the United States.” Some propaganda and disinformation experts already fear they have become a lightning rod for spiralling harassment by lawfare, Russian hacks or politically motivated leaks. The conspiracy business is now a tried and tested, sophisticated weapon system for undermining democratic institutions. This surely only benefits profiting industries, extremists, and foreign actors seeking to destabilise the United States and other democracies around the world? And yet, we are still talking about mis- and disinformation.

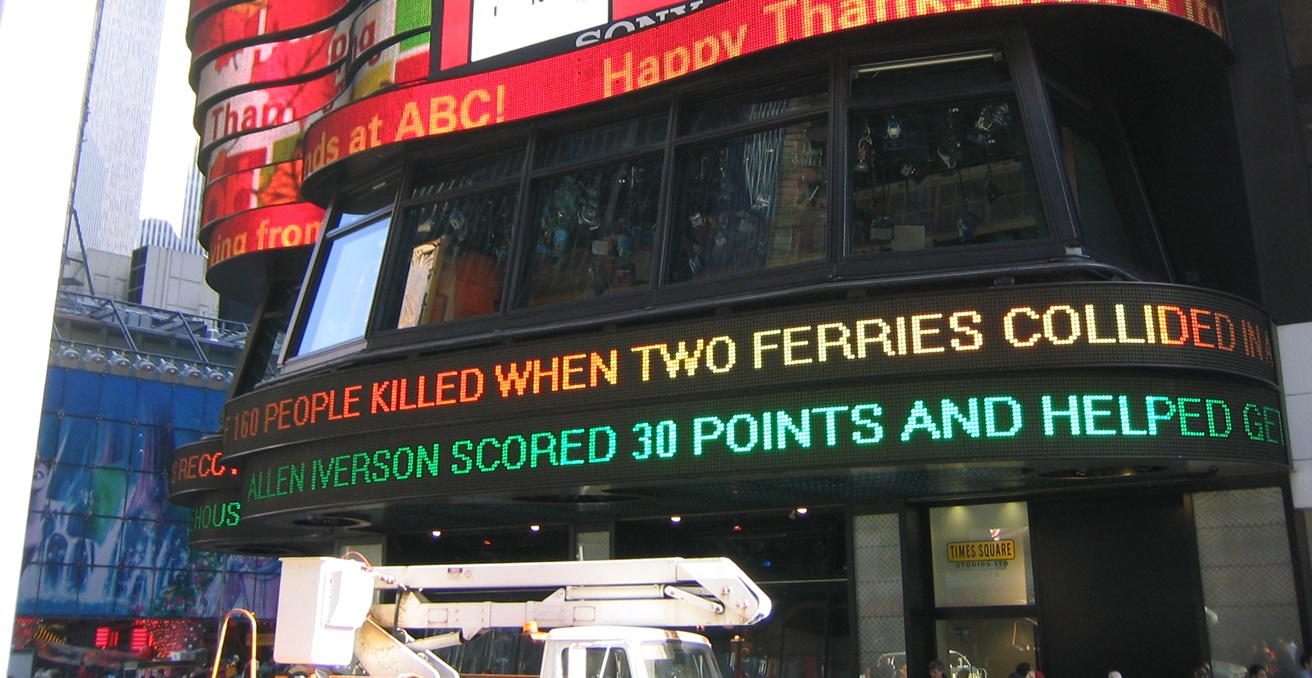

The focus of journalists, researchers, and policymakers must shift to the attention economy. This refers to industries that turn people’s attention to digital products into a highly desirable currency: as users trade behaviour data for services they receive, their attention to games, videos or other content becomes a precious resource that can be sold to advertisers. Social media companies prioritise content that “engages” us, because user attention increases targeted advertising profits, and feeds the insatiable appetite for data that is destroying not only our communities but our planet. Whether it is to fuel gun sales, erase abortion rights, or raise political demagogues – even platforms like Facebook know this model drives extremism. It’s no wonder Big Tech companies have claimed that their recommendations are also protected by the First Amendment. But as Jeffrey Atik and Karl Manheim at Loyola Marymount University have pointed out, legally speaking there is a difference between content posted to a platform and the output of the AI algorithms.

The solution doesn’t have to be “censorship.” The algorithmic social engineering that makes social media so compulsive also limits the free speech reach of users less profitable for the company. From QAnon, to Pizzagate and the Twitter Files, to Trump’s “big lie,” social media platform algorithms decide what content is shown and in which order. Recommendation algorithms are not protected speech but are the autonomous actions and “decisions” of machines, calculated to keep us “engaged.” The US first needs comprehensive privacy legislation that is at least as strong as that of Canada (a country which has the toughest fines in the world, making directors liable for reporting breaches). Presently Americans have little control over what is done with their data. Legislation has been slow and, to date, predictably influenced by Big Tech. Industry lobbying on AI has increased 185 percent in a year amid increasing calls for President Joe Biden to codify controls on AI. New regulations should aim to completely decouple the economy of social media from “likes,” “dislikes,” and user engagement to prevent or disrupt the use of audience engagement data for recommendation algorithms or targeted advertising.

To be sure, such companies could still make money with advertising, and disinformation would continue to exist. But conspiracy theories and disinformation wouldn’t be driven by virality and commercially incentivised in ways that keep us hooked. And it would disrupt the wider industry expanding to fuel the demand for engaging conspiracy content. Yes, that would upend Big Tech’s business model. But in the next two years democracy will depend on policymakers’ willingness to form a radical response to the digital influence industry’s greed.

Emma Briant is an Associate Professor of News and Political Communication at Monash University. Her research interests include propaganda and information warfare in the digital age.

This article is published under a Creative Commons License and may be republished with attribution.